Hello,

We bought some new RED60s, and last night I saw this after a planned WAN1 outage:

2020:10:13-03:06:51 suerfw01-2 red2ctl[8806]: Overflow happened on reds4:0

2020:10:13-03:06:51 suerfw01-2 red2ctl[8806]: Missing keepalive from reds4:0, disabling peer *external WAN1 IP*

2020:10:13-03:06:51 suerfw01-2 red2ctl[8806]: Missing keepalive from reds4:1, disabling peer *external WAN2 LTE IP*

Our WAN2 LTE backup worked fine, but in the past these overflow issues bricked a few RED50s, as you might remember. What are we seeing here? Is this normal?

We also had a second issue last week. All WLAN access points went offline even though the tunnel was up. The RED thinks it is online, but it isn't. Pinging the gateway works, but no traffic passes through the tunnel. Internet access was also dead. There were no log entries. Has anyone else had this issue? This is already the second time I have seen it. The first time, I contacted Sophos and they sent me a new device. Now the replacement behaves the same way.

WAN1 is a Deutsche Telekom 100 Mbit/s symmetric business connection. I'm tired of creating tickets. I have contacted my local ISP four times, and all Sophos can do is send new devices. You can imagine how much work this has already caused. I have a second, deactivated backup RED50 in the rack, which I switch to if the RED60 dies again. Normally it is possible to switch back to the RED60 after some time or after hard-resetting it. Problem: the RED50 is also not very stable (it goes down under load). This drives me crazy.

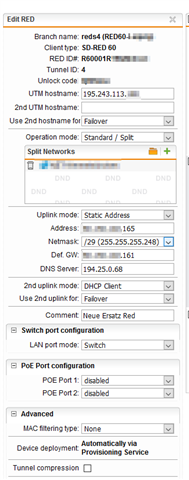

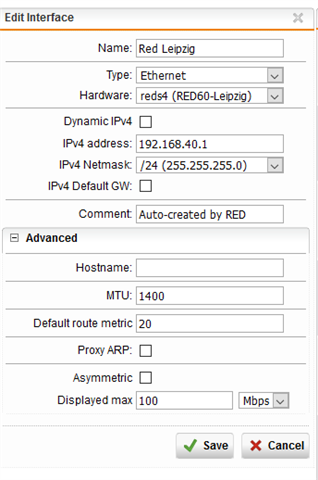

Configuration:

Any ideas?

Sorry for my English.

Tim
