I need to pick the brains of the experts here, as my own knowledge is exhausted at the moment.

Situation:

- UTM in a datacenter, connected to a 1Gbps uplink with a 40Mbps 95th-percentile commit. Bandwidth usage above that is charged at a pretty steep rate.

- Webserver in a DMZ VLAN that serves software updates (ipk packages) to devices running embedded Linux.

- Device owners worldwide seem to have "all" decided to run their updates every day in the same 60-minute window.

- This causes outgoing bandwidth spikes of over 200Mbps, which lead to a hefty bandwidth charge at the end of the month.
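
For reference, this is roughly how 95th-percentile billing is usually computed, as a minimal Python sketch. It assumes 5-minute samples over a 30-day month and the common "sort, discard the top 5%" method; the 30Mbps baseline and the spike lengths are hypothetical numbers, not measurements from this UTM, and the provider's exact method may differ.

```python
def billable_95th(samples_mbps: list[float]) -> float:
    """95th-percentile value: sort the samples and discard the highest 5%."""
    ordered = sorted(samples_mbps)
    # ceil(0.95 * n) samples are kept; integer arithmetic avoids float rounding.
    kept = (95 * len(ordered) + 99) // 100
    return ordered[kept - 1]


def month_of_samples(spike_minutes: int, days: int = 30, interval_min: int = 5,
                     baseline_mbps: float = 30.0, spike_mbps: float = 200.0) -> list[float]:
    """Hypothetical traffic: a flat baseline plus one daily spike of the given length."""
    per_day = 24 * 60 // interval_min          # 288 samples per day at 5-minute intervals
    spike_samples = spike_minutes // interval_min
    day = [spike_mbps] * spike_samples + [baseline_mbps] * (per_day - spike_samples)
    return day * days


if __name__ == "__main__":
    print(billable_95th(month_of_samples(60)))   # 30.0: the spike falls inside the discarded 5%
    print(billable_95th(month_of_samples(90)))   # 200.0: a longer spike sets the billed value
```

Under these assumptions the top 432 samples (36 hours a month, about 72 minutes a day) are discarded, so the billed value only jumps once the daily busy period, ramp-up and tail included, runs past roughly 72 minutes.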

I've been asked to do something about this, i.e. "flatten" the spike, so that (1) the charge is avoided, but (2) the downloads are not impeded too much.
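
To put a rough number on "not impeded too much": if the same update volume has to fit under a cap, the busy period stretches by the ratio of peak to cap. A back-of-the-envelope Python sketch, assuming (hypothetically) that the 200Mbps peak were sustained for the full hour, which probably overstates the real volume:

```python
def drain_time_hours(peak_mbps: float, window_hours: float, cap_mbps: float) -> float:
    """Hours needed to move the same volume at cap_mbps (protocol overhead ignored)."""
    volume_megabits = peak_mbps * window_hours * 3600
    return volume_megabits / cap_mbps / 3600


volume_gb = 200 * 3600 / 8 / 1000        # 200Mbps for one hour, in decimal gigabytes
print(volume_gb)                          # 90.0
print(drain_time_hours(200, 1, 40))       # 5.0 hours at the 40Mbps commit
print(drain_time_hours(200, 1, 50))       # 4.0 hours at a 50Mbps cap
```

In other words, flattening trades a one-hour burst for several hours of slower, but still completing, downloads.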

I currently have a bandwidth throttling entry defined on the inside VLAN interface: "server-ip:80 -> internet:1-65535", shared 50Mbps. This works for (1) but not for (2): once the 50Mbps is reached, new connections can't get through. This not only causes errors on the embedded devices, but their external service monitoring can't get through either, goes berserk and SMSes their server admin out of bed.

A closer look shows that this is implemented as an iptables bandwidth policy, which simply drops excess packets without any shaping or equalizing. So that doesn't work.
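
To illustrate the difference, here is a minimal Python sketch of the two behaviours. It models the drop-only policy as a token-bucket policer (an assumption, not necessarily how the UTM builds it) and, for contrast, a simple leaky-bucket shaper with an unbounded queue; packet sizes and rates are made-up numbers. With a burst of ten packets, the policer forwards what fits in the bucket and drops the rest, while the shaper forwards everything, just later.

```python
def police(arrivals: list[float], size: int, rate: float, burst: float) -> list[bool]:
    """Token-bucket policer: True = forwarded, False = dropped. Arrivals must be sorted."""
    tokens = burst          # bucket starts full
    last = 0.0
    verdicts = []
    for t in arrivals:
        # Refill for the time elapsed since the previous packet, capped at the bucket size.
        tokens = min(burst, tokens + (t - last) * rate)
        last = t
        if tokens >= size:
            tokens -= size
            verdicts.append(True)
        else:
            verdicts.append(False)   # over the limit: the packet is simply dropped
    return verdicts


def shape(arrivals: list[float], size: int, rate: float) -> list[float]:
    """Leaky-bucket shaper with an unbounded FIFO: returns each packet's departure time."""
    departures = []
    link_free_at = 0.0
    for t in arrivals:
        # A packet starts once it has arrived and the previous packet has been sent.
        start = max(t, link_free_at)
        link_free_at = start + size / rate
        departures.append(link_free_at)
    return departures


burst_arrivals = [0.0] * 10                                # ten 1500-byte packets at once
print(police(burst_arrivals, 1500, 1500.0, 3000.0).count(False))   # 8 dropped
print(shape(burst_arrivals, 1500, 1500.0)[-1])                     # 10.0: last one sent at t=10s
```

A real shaper has a finite queue and will eventually drop as well, but TCP senders mostly see added delay and back off, instead of every new connection's handshake packets competing with bulk data for the same tokens.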

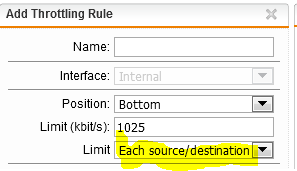

I have tried to replace it with a bandwidth pool definition on the outside interface. This works when I define "any:80 -> internet:1-65535", but not when I define "server-ip:80 -> internet:1-65535", which leads me to believe the pool is applied after NAT, so a selector can no longer match on an internal IP. Is this correct? As there are more web services in that DMZ and in others, a pool on all outgoing HTTP traffic won't work.
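
The suspected behaviour can be illustrated with a toy Python model. It assumes the webserver is published through a DNAT rule and that the outside-interface pool classifies reply traffic after the reverse translation has restored the public source address; all addresses are hypothetical, and this models the behaviour described above, not the UTM's internals.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class Packet:
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int


# Hypothetical addresses (private/documentation ranges), not the real setup.
SERVER_INTERNAL = "10.0.10.5"
SERVER_PUBLIC = "203.0.113.5"
CLIENT = "198.51.100.7"

# Reply direction of the inbound DNAT: the server's internal source is rewritten
# back to the public address before the packet leaves the outside interface.
REVERSE_DNAT = {(SERVER_INTERNAL, 80): (SERVER_PUBLIC, 80)}


def leave_outside_interface(pkt: Packet) -> Packet:
    """Apply the reverse translation, as seen by anything classifying on egress."""
    mapped = REVERSE_DNAT.get((pkt.src_ip, pkt.src_port))
    if mapped is None:
        return pkt
    return replace(pkt, src_ip=mapped[0], src_port=mapped[1])


def selector_matches(pkt: Packet, src_ip: Optional[str], src_port: int) -> bool:
    """Selector "src_ip:src_port -> internet:1-65535"; src_ip=None means "any"."""
    ip_ok = src_ip is None or pkt.src_ip == src_ip
    return ip_ok and pkt.src_port == src_port and 1 <= pkt.dst_port <= 65535


reply = Packet(SERVER_INTERNAL, 80, CLIENT, 51234)
on_wire = leave_outside_interface(reply)

print(selector_matches(reply, SERVER_INTERNAL, 80))    # True: inside VLAN, before NAT
print(selector_matches(on_wire, SERVER_INTERNAL, 80))  # False: internal IP is gone
print(selector_matches(on_wire, None, 80))             # True: "any:80" still matches
print(selector_matches(on_wire, SERVER_PUBLIC, 80))    # True: post-NAT public address
```

If that is what happens, a selector written against the server's public address (assuming it has one of its own) would still single out its traffic, whereas the internal "server-ip" never can.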

Are there any other options I can try to achieve this?

P.S. FYI, this is not a paid job for me: the client is an "open source development community" that survives on donations, and I provide network/security support to them for free. ;-)
