I've got a large number of UTM devices at sites with dual ISPs and we're trying to resolve a 'best practices' question.

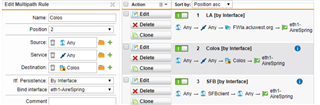

We typically have both ISPs active with multipathing / weights set up to put our 'priority' traffic (VOIP and RED Tunnels) on the better ISP, and everything else on the secondary. This works great until the primary fails, at which point the tunnels fail over to the secondary. That's not a problem, except that when the primary comes back up, the tunnels never fail back to the primary interface on their own. They can sit on the secondary (weaker) connection for hours, days, or weeks until we manually deactivate and reactivate them.
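To make the behavior concrete, here's a toy Python model of what we're seeing. It only illustrates the observed symptom; it is not how the UTM actually selects interfaces internally, and the `StickyTunnel` class, its method names, and the uplink labels are all made up for this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class StickyTunnel:
    """Toy model: the tunnel only re-selects an uplink when its current one goes down."""

    uplinks: list                       # uplink names, ordered best first (hypothetical labels)
    up: dict = field(init=False)        # uplink name -> True if the link is up
    current: str = field(init=False)    # uplink the tunnel is currently riding on

    def __post_init__(self):
        self.up = {name: True for name in self.uplinks}
        self.current = self.uplinks[0]

    def _best_available(self):
        # First uplink in preference order that is up, or None if all are down.
        return next((name for name in self.uplinks if self.up[name]), None)

    def set_link(self, name, is_up):
        """Record a link state change; move the tunnel only if its current uplink is down."""
        self.up[name] = is_up
        if not self.up[self.current]:
            best = self._best_available()
            if best is not None:
                self.current = best

    def bounce(self):
        """Manual deactivate/reactivate: the tunnel comes back on the best available uplink."""
        best = self._best_available()
        if best is not None:
            self.current = best


tunnel = StickyTunnel(["primary", "secondary"])
tunnel.set_link("primary", False)   # outage: tunnel fails over
after_outage = tunnel.current
tunnel.set_link("primary", True)    # primary restored: nothing triggers a move
after_restore = tunnel.current
tunnel.bounce()                     # what we currently do by hand
after_bounce = tunnel.current
print(after_outage, after_restore, after_bounce)
```

In this model the tunnel stays on `secondary` after the primary returns, because a link coming back up never triggers a re-selection. Only the manual bounce does, which matches what we do by hand today.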

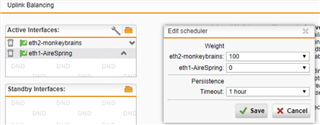

We're considering going to an Active / Standby setup with dual ISPs to address this issue. However, in that configuration our PRTG service can't properly monitor the backup connection (since it's essentially off).

For those of you on dual ISP setups:

1) How do you make sure RED tunnels (or whatever tunnels) fail back to a primary interface when an outage is resolved?

2) If you're running Active / Standby instead of multipathing, how do you monitor your standby ISP?

Thanks for the guidance.
